The Case Against Measuring Cycle Time

Why I recommend against using cycle time as a measure of engineering performance.

This is the latest issue of my newsletter. Each week I cover research, opinion, or practice in the field of developer productivity and experience. This week is an article I wrote about cycle time.

Many organizations use cycle time as a measure of their engineering performance. But this is something I recommend against.

One problem with using cycle time as a performance measure is that it is only useful in the extremes. Take, for example, a cloud service team that has an average cycle time of 3 months—reducing this team's cycle time is probably a good idea. Now instead, take a more typical team that has an average cycle time of 4 days. Would further optimizing cycle time provide any benefit?

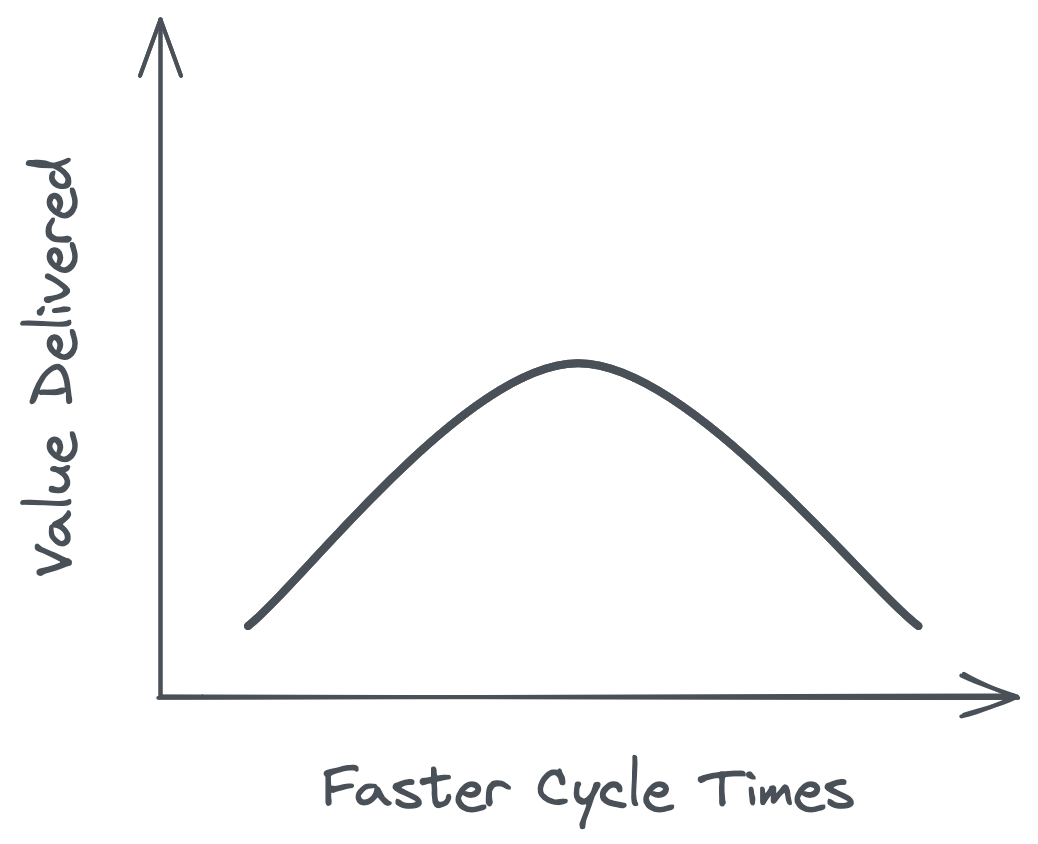

Although perpetually reducing cycle times may feel like improvement, if all you are doing is rearranging work into smaller pieces, then you are not increasing net value delivered to customers. To put it another way: delivering 2 units of software twice per week isn't necessarily better than delivering 4 units of software once per week, especially if breaking down work unnecessarily causes more toil for developers. We can visualize this effect using an inverted J curve:

Another problem with using cycle time as a performance measure is that it doesn't capture speed in the way leaders often intend. To use an analogy, in cycling, your speed is a function of cadence (how fast you pedal) multiplied by power (the amount of force in each pedal stroke). In software development, cycle time tells us the cadence of delivery, but not how much work gets delivered in each cycle (which is famously difficult to measure with software).

When leaders push teams to accelerate cycle times, teams may end up pedaling faster without actually delivering more. And when leaders compare cycle times across teams without factoring in batch size, they may derive false conclusions about teams' performance.
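To make the cadence-versus-batch-size point concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a real measurement method: the `Team` class, the `units_per_delivery` field (batch size, which is hard to quantify in practice), and the simplification that a team ships one delivery per cycle, back to back, over a 5-day week.

```python
from dataclasses import dataclass


@dataclass
class Team:
    """Hypothetical model of a team's delivery pattern (illustration only)."""
    name: str
    cycle_time_days: float      # average working days per delivery (cadence)
    units_per_delivery: float   # assumed batch size; not directly measurable in reality

    def deliveries_per_week(self, working_days: float = 5.0) -> float:
        # Simplifying assumption: deliveries happen one after another.
        return working_days / self.cycle_time_days

    def units_per_week(self, working_days: float = 5.0) -> float:
        # Throughput = cadence x batch size, mirroring the cycling analogy.
        return self.deliveries_per_week(working_days) * self.units_per_delivery


# Team A ships 2 units twice per week; Team B ships 4 units once per week.
team_a = Team("A", cycle_time_days=2.5, units_per_delivery=2.0)
team_b = Team("B", cycle_time_days=5.0, units_per_delivery=4.0)

for team in (team_a, team_b):
    print(
        f"Team {team.name}: cycle time {team.cycle_time_days} days, "
        f"{team.units_per_week()} units/week"
    )
```

Under these assumptions, Team A's cycle time is half of Team B's, yet both deliver the same amount per week. Comparing the two on cycle time alone would rank Team A as twice as fast.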

To sum it up: There are cases in which individual teams may find cycle time useful. However, using cycle time as a top-level performance measure that is pushed onto all teams is counterproductive. To actually improve performance, leaders should focus on measuring the friction experienced by developers and removing the bottlenecks that slow them down.

That’s it for this week! Share your thoughts and feedback anytime by replying to this email.

-Abi

I think it's good to distinguish between metrics used as KPIs and metrics used as a "pulse check" or "health check". Some metrics are meant to be maximized or minimized, whereas others are just meant to be stabilized.

Cycle time, I think, is a sort of pulse check. If you measure it and it makes a surprising move, that might tell you there's something to investigate.

Another aspect to look at is cost of delay. Without knowing how much an organization stands to gain by reducing cycle time, and how much that reduction costs, any value that does get delivered over time is a matter of luck. That is intellectually demeaning. Reinertsen briefly explains how to calculate cost of delay in this video: https://www.youtube.com/watch?v=du2WV1IbULU. If an organization wishes to deliver more but cannot (in other words, if its backlog queue is huge), the queue itself is reason enough to act and to make the same changes it would make after measuring cycle time. Here is another video by Donald Reinertsen, describing cycle time vs. queues: https://www.youtube.com/watch?v=KmvUyPDgquc. Also, measuring cycle time systemically and at scale is not easy. So why spend time measuring it while there is a large queue?